Most people do not abandon musical ideas because they lack taste. They abandon them because the distance between imagination and output is still too long. A creator may know the mood, the pacing, the emotional arc, and even a few lyric lines, yet the idea remains trapped between note apps, unfinished voice memos, and software they have no real wish to learn. That is why an AI Music Generator becomes most useful not as a spectacle, but as a reduction of friction. It lets the creative process start where many real ideas begin: with language, mood, and a rough sense of shape.

That change matters more than it first appears. The modern creator is often not trying to become a recording engineer. A marketer may need a short anthem for a campaign. A video editor may need background music that feels specific rather than generic. A solo writer may want to hear whether a lyric idea actually carries emotional weight when sung. In these cases, the question is not whether AI replaces musicianship. The more practical question is whether it helps people hear the first believable version of an idea earlier.

The Real Shift Is Creative Timing

For years, music tools were powerful but front-loaded. You needed software, time, arrangement instincts, and enough patience to translate feeling into production steps. Now the first draft can appear before the technical commitment fully begins.

That changes the timing of judgment. Instead of wondering for hours whether an idea might work, a creator can test a version, listen back, and decide whether the concept deserves more attention. In my observation, this is one of the most valuable parts of AI music tools: they let people evaluate ideas sooner, not merely faster.

Early Feedback Leads To Better Decisions

When creators can hear a rough result quickly, they stop overprotecting weak ideas and start developing stronger ones. A moody chorus can be tested. A cinematic instrumental can be checked against a video cut. A lyrical concept can be judged for pacing and emotional clarity. What used to remain theoretical becomes audible enough to refine.

Momentum Often Matters More Than Precision

Many projects die not because the first version is bad, but because nothing exists to react to. The first pass created by AI is often useful precisely because it invites response. It gives creators something to shape, reject, adjust, or rebuild. That is different from the old model, where the blank page and blank timeline demanded too much commitment up front.

How ToMusic Fits Into This New Pattern

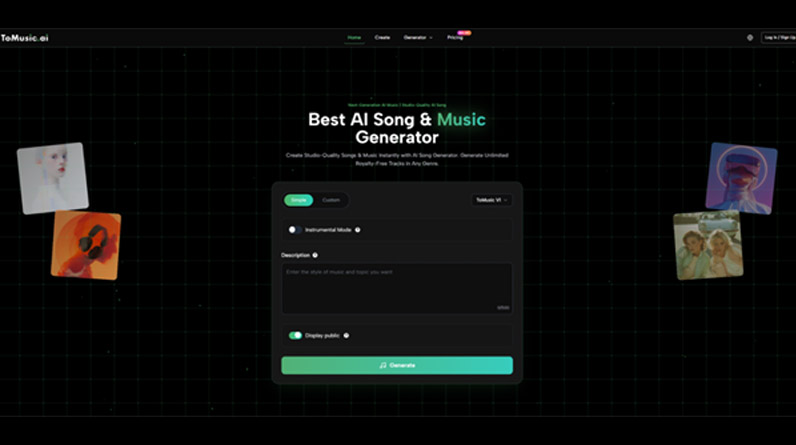

Among the growing field of AI music products, ToMusic stands out less because it tries to dominate every use case and more because it organizes the process in a way many users can understand immediately. The workflow, based on the visible product flow, is not mysterious: choose a model, choose a mode, enter either a music description or lyrics, decide whether you want instrumental output, then generate.

That sounds simple, but simplicity is doing real work here. Instead of overwhelming the user with production abstractions, the platform treats music generation like a structured creative brief. You begin by deciding what kind of outcome you want, not by constructing the entire method from scratch.

Multiple Models Create More Intentional Starting Points

A notable part of the platform is that it presents more than one model version. This matters because users do not all want the same thing. Some want fast idea turnover. Some want longer compositions. Some care more about vocal presence or richer musical structure. A multi-model setup gives the creation process a more deliberate starting point.

Simple And Custom Modes Separate Different Users

The split between Simple mode and Custom mode is practical for a reason. A beginner can describe a mood, genre, or scene in plain language and generate a result. A more hands-on user can move into lyric writing and stronger structural guidance. The product does not force everyone into one creative posture. It allows casual testing and more intentional drafting to live in the same workspace.

Six AI Music Websites Worth Understanding

There are now enough AI music tools on the market that comparison matters. Not every product is built for the same type of creator, and the differences become easier to understand when viewed through use case rather than hype.

1. ToMusic For Flexible Starting Workflows

ToMusic is especially useful for people who want both prompt-based generation and lyric-based song creation in one place. The platform visibly supports model choice, Simple and Custom modes, instrumental generation, and a workflow that feels approachable for fast experimentation.

2. Suno For Quick Full-Song Exploration

Suno is often discussed because it makes full-song creation accessible through fast prompting. It tends to appeal to users who want immediate musical ideas and broad stylistic experimentation without spending much time preparing a production setup.

3. Udio For Prompt-Led Song Drafting

Udio sits in a similar broad category of AI song generation, with an emphasis on turning prompts into complete tracks. For many users, its appeal is the directness of the input-to-output experience.

4. SOUNDRAW For Creator-Oriented Background Music

SOUNDRAW is often more relevant to people who need royalty-focused background music, adjustable structure, and music for ongoing content workflows. It feels closer to a creator utility than a pure songwriting playground.

5. Mubert For Fast Use-Case Music Generation

Mubert is useful when the goal is practical soundtrack generation for videos, podcasts, social clips, or branded content. It leans toward creators who need music aligned to mood, duration, and platform context.

6. AIVA For Style Breadth And Composition Control

AIVA has long appealed to users interested in a broader range of styles and more composition-oriented workflows. It often enters the conversation when creators want AI help but still care about controllable musical direction.

Why ToMusic Often Feels Easier To Start With

A lot of AI tools promise creative freedom, but many still depend on the user already knowing how to frame that freedom. ToMusic feels more accessible because the visible structure does some of that framing for you. The process suggests what decisions matter first: model, mode, prompt or lyrics, instrumental or vocal result.

That may sound basic, but good product design often hides inside ordering. When a platform guides the user through the right sequence, people spend less time figuring out the tool and more time understanding their own intent.

A Lyric Idea Can Become Testable Quickly

One of the more practical advantages is how the platform treats lyrics as a normal input rather than a secondary one. Many creators do not start with a beat or arrangement. They start with words. That is where a lyric-first pathway becomes valuable, because it lets language lead the creative process instead of waiting until the production stage.

Iteration Feels Like Part Of The Design

In my testing of tools in this category, the strongest products are not those that pretend every first result will be perfect. They are the ones that make iteration feel expected and efficient. ToMusic appears designed around that logic. You generate, review, revise, and rerun rather than chasing a fantasy of flawless one-shot output.

How A Real User Might Work Through It

The platform’s visible flow is compact enough to describe in four grounded steps.

Step 1. Select The Model And Format

The user begins by choosing a model and deciding whether the target should be instrumental or song-based. This step matters because it defines the broad creative lane before any content is entered.

Step 2. Choose Simple Or Custom Creation

Next comes a decision about control. Simple mode works for descriptive prompting. Custom mode is better for users who already have lyrics or a more defined idea of the musical direction they want to test.

Step 3. Add Description Or Song Words

The user then either enters a prompt describing mood, genre, and feel, or moves into a more guided Lyrics to Music AI process by supplying original lyrics and section logic. This is where the tool becomes most personally expressive, because the output starts reflecting not just sonic preference but verbal intention.

Step 4. Generate And Improve Through Repetition

After generation, the result can be reviewed and adjusted. If the mood lands but the pacing does not, the prompt can be refined. If the lyrics work but the arrangement feels off, another run may get closer. This is less like hitting a magic button and more like accelerated drafting.
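
The four steps above can be read as a small structured brief that gets checked and then revised between runs. The sketch below is only an illustration of that idea: `MusicBrief`, its field names, the `model-a` label, and the validation rules are all hypothetical, and none of it reflects an actual ToMusic API or how the platform validates input.

```python
from dataclasses import dataclass, replace
from typing import List, Optional


@dataclass(frozen=True)
class MusicBrief:
    """Hypothetical container for the decisions made in Steps 1 to 3."""
    model: str                          # placeholder label, not a real model name
    mode: str                           # "simple" or "custom"
    instrumental: bool = False
    description: Optional[str] = None   # mood, genre, and feel in plain language
    lyrics: Optional[str] = None        # original lyrics for a lyric-first draft

    def problems(self) -> List[str]:
        """Return readable issues; an empty list means the brief looks complete.

        These rules are assumptions made for illustration only.
        """
        issues = []
        if self.mode not in ("simple", "custom"):
            issues.append("mode must be 'simple' or 'custom'")
        if self.mode == "simple" and not self.description:
            issues.append("simple mode needs a description")
        if self.mode == "custom" and not (self.lyrics or self.description):
            issues.append("custom mode needs lyrics or a description")
        if self.instrumental and self.lyrics:
            issues.append("instrumental output would ignore the lyrics")
        return issues


# Step 4 as a loop in miniature: check the brief, revise one field, check again.
draft = MusicBrief(model="model-a", mode="simple")
print(draft.problems())    # ['simple mode needs a description']

revised = replace(draft, description="moody synth-pop chorus, slow build")
print(revised.problems())  # []
```

Keeping the brief immutable and revising it with `replace` mirrors the drafting habit described here: each rerun changes one decision while the rest of the brief stays fixed, which makes it easier to tell which change improved the result.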

A Simple Comparison Of Product Differences

| Platform | Best Understood As | Main Strength | Typical User Need |
| --- | --- | --- | --- |
| ToMusic | Prompt and lyric song workspace | Flexible mode and model choices | Fast idea testing with room for control |
| Suno | Quick full-song generator | Immediate creation flow | Rapid music exploration |
| Udio | Prompt-led song maker | Direct song generation | Fast concept-to-song drafting |
| SOUNDRAW | Creator background music tool | Adjustable royalty-focused tracks | Video and content production |
| Mubert | Use-case soundtrack generator | Mood and duration utility | Podcasts, ads, and social media |
| AIVA | Composition-oriented assistant | Style range and deeper musical framing | More deliberate music building |

What This Means For Different Creators

The most important thing is not whether one tool is universally best. It is whether a tool matches the stage of the creative process you are actually in.

For Video Creators

If the need is speed, timing, and fit-to-project utility, AI music tools reduce the usual friction of searching stock libraries or waiting on custom production. The ability to generate something context-aware is often more useful than browsing hundreds of generic tracks.

For Songwriters

Writers with unfinished lyrics can use these tools to evaluate emotional direction earlier. Hearing lines sung or structured against a generated musical backdrop changes how quickly a writer can spot weak phrasing, unclear pacing, or moments that deserve expansion.

For Non-Musicians With Strong Taste

This may be the most interesting category. Many people know what they want emotionally but lack the technical path to build it. AI music tools do not magically give them mastery, but they do give them a usable first layer of expression.

The Limits Still Matter

A realistic view makes the category easier to trust. AI music is useful, but not frictionless in every sense.

Prompt Quality Still Shapes Results

A vague request often leads to a vague result. The more clearly the user describes mood, structure, instrumentation, and intended context, the better the first output tends to be.
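
One way to make that habit concrete is to write the prompt from named fields and notice which ones were left blank. The helper below is a minimal sketch under that assumption: `build_prompt` and its output format are hypothetical, and no tool mentioned in this article is known to expect prompts in this shape.

```python
def build_prompt(mood="", structure="", instrumentation="", context=""):
    """Join the supplied fields into one description and list the blank ones."""
    fields = {
        "mood": mood,
        "structure": structure,
        "instrumentation": instrumentation,
        "context": context,
    }
    # Keep only the fields the user actually filled in, in a fixed order.
    parts = [f"{name}: {value.strip()}" for name, value in fields.items() if value.strip()]
    # Anything left blank is a hint about where the request is still vague.
    missing = [name for name, value in fields.items() if not value.strip()]
    return "; ".join(parts), missing


prompt, missing = build_prompt(mood="wistful", context="30-second product video")
print(prompt)   # mood: wistful; context: 30-second product video
print(missing)  # ['structure', 'instrumentation']
```

The list of missing fields is not an error. It is a reminder of what the first pass will have to guess.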

Not Every First Pass Will Feel Finished

Sometimes a generation captures the right atmosphere but misses the vocal texture. Sometimes the arrangement works while the pacing drifts. These are normal tradeoffs, not proof of failure. The point is not perfection on demand. The point is faster discovery.

AI Assistance Is Not Full Studio Editing

For creators who want exact manual control over every arrangement detail from the beginning, traditional production tools still matter. AI generation is strongest when it is used as a draft engine, concept accelerator, or creative bridge.

Why This Category Will Keep Growing

The future of AI music may not be defined by who generates the flashiest song in one click. It may be defined by which tools make creative intention easiest to translate, revise, and reuse. That is a more grounded and durable measure of value.

Seen through that lens, ToMusic is part of a broader shift in how music gets started. It helps move the first draft closer to the first idea. And once that gap becomes smaller, more people can participate in music creation without pretending the process has become trivial. The craft remains. What changes is how early the work can begin.