Performance marketing has entered a phase where the “hero creative” is no longer a sustainable strategy. On platforms like Meta and TikTok, the algorithmic appetite for fresh content has shortened the effective lifespan of a static image or video to a matter of days, sometimes less, before rising ad frequency and creative fatigue cause click-through rates (CTR) to plummet and cost per click (CPC) to spike.

The traditional design workflow—where a creative team spends a week conceptualizing and polishing three to five assets—is fundamentally incompatible with this high-velocity reality. To maintain a competitive return on ad spend (ROAS), the modern performance marketer must shift from a production mindset to a systems mindset. The goal is no longer to find one “perfect” ad, but to maintain a living library of visual variations that outpace the speed of audience boredom. This is where multi-model AI pipelines become a commercial necessity rather than a technical novelty.

The Shrinking Lifecycle of the Static Social Ad

The arithmetic of digital advertising is unforgiving. When an audience sees the same visual hook repeatedly, their cognitive filters begin to screen it out. This creative decay is observable in the data: CTR declines and CPC rises steadily over a 14-day window as the platform struggles to find new users willing to engage with a stale asset.
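Creative decay can be watched for programmatically rather than by eyeballing dashboards. The sketch below fits a least-squares line to daily CPC over the most recent 14 days and flags the asset when CPC is climbing faster than a chosen percentage per day. It assumes you can export daily CPC per asset from your ad platform; the `DailyStats` type, the function names, and the 1%-per-day threshold are hypothetical choices for illustration, not platform benchmarks.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class DailyStats:
    day: int    # days since the asset launched
    cpc: float  # average cost per click on that day


def cpc_slope(stats: list[DailyStats]) -> float:
    """Ordinary least-squares slope of CPC versus day (currency units per day)."""
    n = len(stats)
    if n < 2:
        raise ValueError("need at least two days of data")
    mean_x = sum(s.day for s in stats) / n
    mean_y = sum(s.cpc for s in stats) / n
    sxx = sum((s.day - mean_x) ** 2 for s in stats)
    if sxx == 0:
        raise ValueError("need at least two distinct days")
    sxy = sum((s.day - mean_x) * (s.cpc - mean_y) for s in stats)
    return sxy / sxx


def is_fatigued(stats: list[DailyStats], window: int = 14,
                max_daily_rise_pct: float = 1.0) -> bool:
    """Flag an asset whose CPC trend over the last `window` days rises faster than
    `max_daily_rise_pct` percent of the window's mean CPC per day.
    Both defaults are illustrative assumptions, not platform benchmarks."""
    recent = sorted(stats, key=lambda s: s.day)[-window:]
    mean_cpc = sum(s.cpc for s in recent) / len(recent)
    return cpc_slope(recent) / mean_cpc * 100 > max_daily_rise_pct
```

For example, a CPC drifting from 1.00 to 1.26 over 14 days (0.02 per day) rises by about 1.8% of the window's mean CPC per day and would be flagged under the default threshold. Daily CPC is noisy, so in practice you might also watch CTR and frequency before retiring an asset.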

In the past, solving this required a massive photography or design budget. You would need multiple sets, different models, varied lighting, and hours of post-production. Most mid-market brands simply couldn’t afford to refresh their entire creative suite twice a month. This led to “creative stagnation,” where brands would keep running underperforming ads simply because they lacked the resources to replace them.

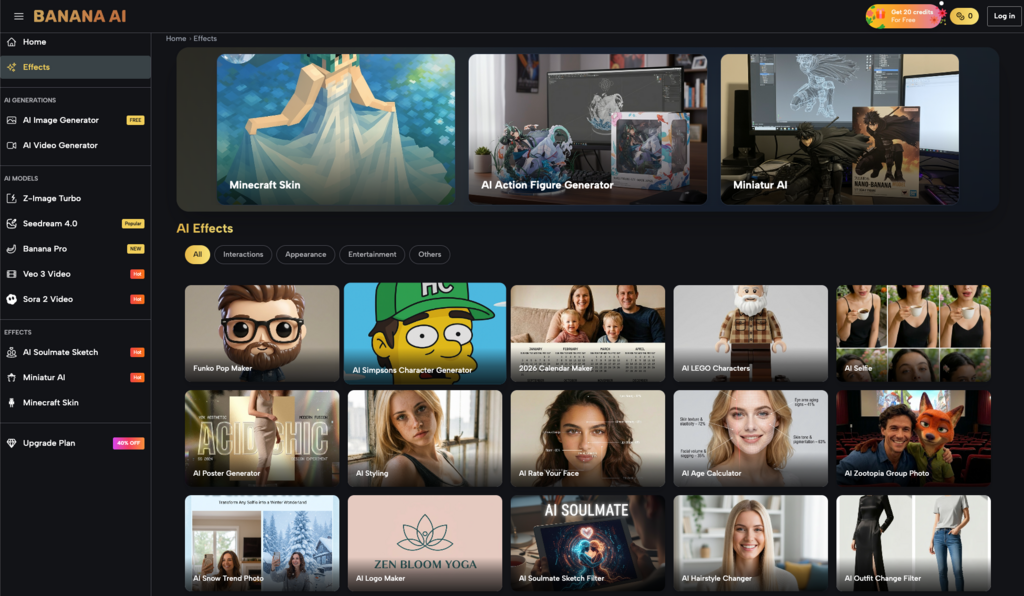

High-velocity iteration changes this dynamic. By utilizing tools like Banana AI, marketers can now decouple the “concept” of an ad from its “execution.” The core marketing hook—the psychological reason someone buys—remains constant, but the visual delivery of that hook can be iterated endlessly. This allows for a “quality at scale” approach where volume serves as the testing ground for quality.

Architecting a Multi-Model Production Stack

Not all AI models are created equal, and a professional workflow should reflect that. A common mistake in AI asset generation is using a single model for every task. This often produces a recognizable “visual signature,” a recurring texture or lighting style, that becomes predictable and, eventually, invisible to the consumer.

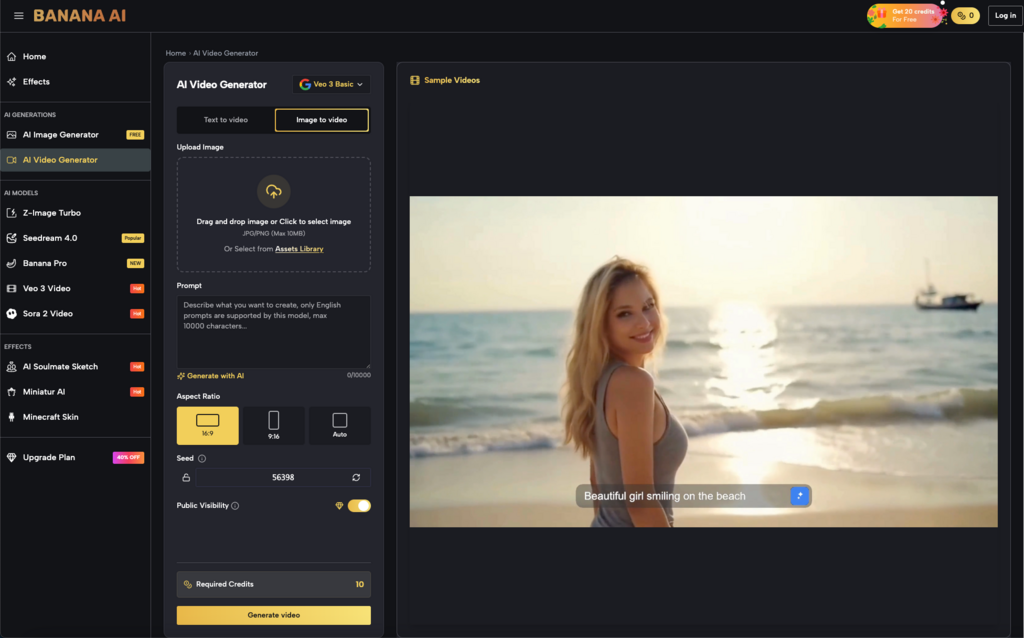

A more sophisticated pipeline assigns different models to different stages of production. For example, within a platform like Banana AI, a creator might use a high-speed model such as Z-Image Turbo for initial prototyping. This stage isn’t about final polish; it’s about generating 50 different variations of a concept to see which compositions are most likely to stop the scroll in a social feed.

Once a winning composition or color palette is identified through low-stakes testing, the marketer can move to a high-fidelity model such as Seedream 4.0. This model prioritizes texture, lighting nuance, and anatomical correctness, producing assets that are ready for high-spend deployment. By separating the “exploration” phase from the “production” phase, teams can save both time and computational credits, focusing their heavy-duty generation on ideas that have already shown promise in rough drafts.
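The explore-then-promote split can be expressed as a small two-stage routine: generate many cheap drafts per concept, score them, and spend high-fidelity generation only on the top few. This is a sketch under stated assumptions: the two model names come from the text, but `GenerateFn`, `explore`, `promote`, and the `fake_generate` stand-in are hypothetical and do not represent Banana AI's actual API. The `score` field is assumed to be filled in from a low-budget test, for example early CTR.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable

# Hypothetical generator signature: (model_name, prompt, seed) -> asset ID.
# Swap in whatever client your image tool actually provides.
GenerateFn = Callable[[str, str, int], str]

EXPLORE_MODEL = "Z-Image Turbo"    # fast, cheap drafts
PRODUCTION_MODEL = "Seedream 4.0"  # slower, high-fidelity finals


@dataclass
class Draft:
    prompt: str
    seed: int
    asset_id: str
    score: float = 0.0  # filled in later, e.g. from a low-budget test's CTR


def explore(generate: GenerateFn, prompts: list[str], seeds_per_prompt: int = 5) -> list[Draft]:
    """Stage 1: many cheap variations per concept on the fast model."""
    return [
        Draft(prompt, seed, generate(EXPLORE_MODEL, prompt, seed))
        for prompt in prompts
        for seed in range(seeds_per_prompt)
    ]


def promote(generate: GenerateFn, drafts: list[Draft], top_k: int = 3) -> list[str]:
    """Stage 2: re-render only the best-scoring drafts on the production model.
    The seed is passed along for traceability only; a different model will not
    reproduce the draft's composition from the same seed."""
    winners = sorted(drafts, key=lambda d: d.score, reverse=True)[:top_k]
    return [generate(PRODUCTION_MODEL, d.prompt, d.seed) for d in winners]


def fake_generate(model: str, prompt: str, seed: int) -> str:
    """Stand-in generator so the sketch runs without any external API."""
    return f"{model}|{prompt}|{seed}"
```

Injecting the generator as a plain function keeps the routing logic independent of any vendor SDK and makes the pipeline easy to test with a stub.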

Practical Iteration: From Image-to-Image to Variable Testing

The most effective way to combat fatigue without losing brand identity is through image-to-image workflows. Instead of writing a text prompt from scratch for every ad, creators can use Banana AI Image to iterate on a base product photo.

Consider a skincare brand. The core product—the bottle—must remain consistent. Using an image-to-image pipeline, the marketer can keep the geometry of the bottle locked while swapping everything else. They can test the product in a minimalist Scandinavian bathroom, on a sun-drenched beach, or against a vibrant, high-contrast neon background.
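One way to structure the locked-product workflow is to treat the base photo as a constant and create one image-to-image job per combination of scene and lighting. The sketch below only builds the job specifications; submitting them depends on your tool. `ImageToImageJob`, the file path, and the prompt wording are hypothetical, and a prompt instruction to keep the product unchanged does not guarantee that a given model will preserve it exactly.

```python
from __future__ import annotations

import itertools
from dataclasses import dataclass


@dataclass(frozen=True)
class ImageToImageJob:
    """One generation request. The reference photo is the locked element;
    only the scene prompt changes between jobs. Field names are illustrative."""
    reference_image: str
    scene_prompt: str
    variant_id: str


BASE_PHOTO = "assets/serum_bottle.png"  # hypothetical path to the base product photo

SCENES = {
    "scandi": "minimalist Scandinavian bathroom, pale wood, white tiles",
    "beach": "sun-drenched beach, warm sand",
    "neon": "vibrant high-contrast neon background",
}
LIGHTING = {
    "golden": "warm golden-hour light",
    "studio": "clinical high-key studio lighting",
}


def build_jobs(reference_image: str, scenes: dict[str, str],
               lighting: dict[str, str]) -> list[ImageToImageJob]:
    """Cross every scene with every lighting mood; the product reference never changes."""
    jobs = []
    for (scene_key, scene), (light_key, light) in itertools.product(scenes.items(), lighting.items()):
        jobs.append(ImageToImageJob(
            reference_image=reference_image,
            scene_prompt=f"{scene}, {light}, keep the product unchanged",
            variant_id=f"{scene_key}-{light_key}",
        ))
    return jobs
```

Encoding the variant ID as `scene-lighting` makes it straightforward to attribute results back to a single variable later.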

This modular approach allows for rapid testing of visual variables:

- Background Texture: Does a marble surface convert better than a wooden one?

- Lighting Mood: Does golden hour warmth outperform clinical, high-key studio lighting?

- Color Palettes: Do complementary color schemes (blue and orange) stop the scroll more effectively than monochromatic ones?
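Questions like “does marble convert better than wood?” come down to comparing two conversion rates. A standard tool for that is the two-proportion z-test, sketched below with only the standard library. It assumes independent audiences for each variant and samples large enough for the normal approximation; the function name is illustrative.

```python
from __future__ import annotations

import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test for a difference between two conversion rates.
    Returns (z, p_value). Assumes independent samples large enough for the
    normal approximation to hold."""
    if min(n_a, n_b) <= 0:
        raise ValueError("sample sizes must be positive")
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # rate under the null hypothesis of no difference
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("both variants converted at 0% or 100%; the test is undefined")
    z = (p_a - p_b) / se
    # For the standard normal CDF Phi: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

With illustrative counts of 120 conversions from 4,000 clicks for marble versus 90 from 4,000 for wood, `two_proportion_z_test(120, 4000, 90, 4000)` gives z ≈ 2.1 and p ≈ 0.036. When many variants are tested at once, some comparisons will look significant by chance, so it helps to fix sample sizes in advance and adjust for multiple comparisons.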

It is important to note a current limitation here: while today's image models handle background and lighting swaps well, they still struggle with precise text rendering and exact recreation of product labels. Many professional workflows therefore generate the “environment” with Banana AI Image and then composite the actual high-resolution product shot over it in a tool like Photoshop or Canva. Expecting the AI to perfectly recreate a specific logo or the fine print on a label often leads to frustration and “uncanny” results that can damage brand trust.
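Photoshop and Canva handle the compositing step through layers and masks, but the underlying operation is the Porter-Duff “over” operator: each output pixel is the foreground weighted by its alpha plus the background weighted by one minus that alpha. The pure-Python sketch below shows that math on small pixel grids, assuming a product cutout with straight (non-premultiplied) alpha; a real pipeline would use an imaging library rather than nested lists, and the function names are illustrative.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]
RGBA = Tuple[int, int, int, int]


def over(fg: RGBA, bg: RGB) -> RGB:
    """Porter-Duff 'over' for one pixel: a straight-alpha foreground onto an
    opaque background. All channels are 0-255."""
    alpha = fg[3] / 255
    return tuple(round(f * alpha + b * (1 - alpha)) for f, b in zip(fg[:3], bg))


def composite(product: List[List[RGBA]], background: List[List[RGB]],
              top: int, left: int) -> List[List[RGB]]:
    """Paste a product cutout onto a generated background at (top, left).
    Pixels falling outside the background are clipped. Returns a new image
    and leaves `background` untouched."""
    out = [row[:] for row in background]
    for y, row in enumerate(product):
        for x, px in enumerate(row):
            by, bx = top + y, left + x
            if 0 <= by < len(out) and 0 <= bx < len(out[by]):
                out[by][bx] = over(px, out[by][bx])
    return out
```

Because the product pixels come from the real photo, the label and logo stay exactly as shot; only the surroundings are generated.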

Synchronizing Landing Page Visuals with Ad Variations

The conversion funnel doesn’t end at the click. One of the most overlooked causes of high landing-page bounce rates is visual disconnect. If a user clicks an ad featuring a product in a tropical setting and lands on a page with a stark white background, the cognitive friction can be enough to kill the sale.

AI visual tools allow marketers to maintain visual continuity across the entire journey. For every ad variant produced, a matching hero image for the landing page can be generated. This level of personalization was previously impractical for anyone without an enterprise-level creative department.
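One common way to wire ad variants to matching landing-page heroes is to tag each ad's destination URL with a variant ID in the standard `utm_content` parameter and have the page choose its hero image from that value. The registry, variant IDs, and image paths below are hypothetical; the fallback hero keeps the page on-brand when the variant is missing or unknown.

```python
from urllib.parse import parse_qs, urlparse

# Hypothetical registry: ad variant ID -> landing-page hero image.
HERO_BY_VARIANT = {
    "tropical-01": "/img/hero/tropical-01.jpg",
    "scandi-02": "/img/hero/scandi-02.jpg",
}
DEFAULT_HERO = "/img/hero/default.jpg"


def hero_for_url(url: str) -> str:
    """Pick the hero image matching the ad variant named in utm_content."""
    params = parse_qs(urlparse(url).query)       # {"utm_content": ["tropical-01"], ...}
    variant = params.get("utm_content", [""])[0]  # first value, or "" if absent
    return HERO_BY_VARIANT.get(variant, DEFAULT_HERO)
```

Falling back to a single default hero also limits how far the page can drift from the core brand look.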

Creators can also use AI to generate niche-specific lifestyle assets. If you are targeting marathon runners with one ad set and yoga enthusiasts with another, your landing page visuals can shift to reflect the lifestyle imagery relevant to each cohort. Using Banana AI to create these assets, without the need for a $10,000 photoshoot, makes a level of micro-targeting practical that can meaningfully improve ROAS.

However, there is a strategic uncertainty to manage here. Over-optimizing visuals for every tiny niche can lead to a fragmented brand identity. There is a delicate balance between “relevance” and “consistency.” A brand that looks completely different to every person who sees it can fail to build long-term recognition and trust.

Strategic Uncertainties and the ‘Uncanny Valley’ in ROAS

While the efficiency gains of AI are substantial, the performance marketing community is still navigating the “uncanny valley” of generative content. There is a widely reported pattern in which hyper-realistic, AI-generated images perform worse than authentic, slightly imperfect user-generated content (UGC).

Consumers are becoming increasingly sensitive to the “AI look”—the overly smooth skin, the impossible lighting, and the generic perfection. If an ad feels too “generated,” it can trigger a defensive reaction in the viewer, who may associate the visual artificiality with a low-quality product or a potential scam. This is a critical moment of uncertainty: more polish does not always equal more profit. Sometimes, the most effective use of Banana AI is to generate assets that look intentionally grounded and “real” rather than cinematic and spectacular.

Another significant limitation is the “concepting” gap. AI is a world-class executor but a mediocre strategist. It cannot tell you why a certain demographic is feeling anxious or which psychological “hook” will resonate with them. The high-level concepting, the insight that drives the creative direction, must still come from human marketers who understand the nuances of the market.

Finally, the legal landscape surrounding AI-generated imagery remains in a state of flux. While many platforms provide terms that allow for commercial use, the question of copyrightability for AI-generated works is still being litigated in various jurisdictions. Marketers should maintain a clear internal record of their production process and be aware that their “unique” AI assets may not have the same legal protections as traditionally commissioned photography.
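A lightweight way to keep that internal record is an append-only log with one entry per published asset. The sketch below writes JSON Lines records containing a hash of the file plus the model, prompt, seed, reference image, and a note on human edits. The field set and function names are assumptions for illustration, not a legal or regulatory standard; documenting human contributions such as compositing and retouching may matter if authorship is ever questioned.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def provenance_record(asset_bytes: bytes, *, model: str, prompt: str, seed: int,
                      reference_image: Optional[str] = None,
                      human_edits: Optional[str] = None) -> dict:
    """Describe how one asset was produced. Field names are illustrative."""
    return {
        "sha256": hashlib.sha256(asset_bytes).hexdigest(),  # ties the entry to the exact file
        "model": model,
        "prompt": prompt,
        "seed": seed,
        "reference_image": reference_image,  # e.g. the base product photo
        "human_edits": human_edits,          # compositing, retouching, copy changes
        "created_at": datetime.now(timezone.utc).isoformat(),
    }


def append_to_log(record: dict, path: str) -> None:
    """Append one record as a single line of JSON (JSON Lines format)."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
```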

Conclusion

The shift toward multi-model pipelines and high-velocity iteration is not just a trend; it is a response to the way modern ad platforms function. By utilizing the specific strengths of various models within Banana AI, marketers can move away from the “hero image” trap and build a creative engine that produces fresh, relevant assets at the scale the market demands.

Success in this new era requires a blend of technical tool-savviness and traditional marketing intuition. The tools provide the speed, but the marketer provides the direction. By focusing on modular testing, visual continuity, and a healthy skepticism of “perfection,” creative teams can turn the challenge of creative fatigue into a significant competitive advantage.