There is a subtle shift happening in how creators approach visuals. Instead of asking how to design a perfect image, many are beginning to ask how that image will behave over time. This is where Image to Video AI becomes relevant: not as a shortcut, but as a different way of thinking about visual output.

The challenge is not creativity but translation. A still image captures intent, but it cannot express progression. Video can, but traditional workflows demand too much time and technical effort. The gap between the two has historically been difficult to bridge.

What is changing now is that this gap can be addressed directly, without requiring users to learn animation or editing systems.

Why Static Design No Longer Defines Final Output

For years, visual creation ended with an image.

Now, that endpoint feels incomplete.

Shift From Composition To Experience

A static image focuses on:

- Balance

- Color harmony

- Framing

A moving image introduces:

- Timing

- Direction

- Energy flow

This changes how creators think about their work.

From Object To Sequence

Instead of designing a single frame, creators begin to think in:

- Entry points

- Transitions

- Exit states

Even simple motion adds narrative structure.

Understanding The Core Mechanism Behind Image Animation

At its core, the system operates on three layers:

Visual Input As Foundation

The image defines:

- What exists in the scene

- Where elements are placed

- How light and texture behave

This foundation is not discarded. It is extended.

Instruction Layer Through Language

The prompt becomes a form of direction.

For example:

- “Slow camera zoom toward subject”

- “Wind gently moving clothing”

- “Character turning head”

These instructions are interpreted, not executed literally.

Temporal Synthesis By The Model

The model fills in:

- Movement between frames

- Depth estimation

- Visual continuity

In my observation, the system prioritizes coherence over exactness, meaning it aims to look believable rather than mechanically precise.

Breaking Down The Real Usage Process

The actual workflow is intentionally simple.

Step 1 Provide The Base Image

Upload a still image in a supported format such as PNG or JPEG.
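
Before uploading, it can help to confirm that a file really is a PNG or JPEG rather than trusting its extension. The following is a minimal sketch that checks the well-known leading bytes of each format. The function names are illustrative and not part of any platform's API, and the platform's full list of accepted formats is not stated here.

```python
from pathlib import Path
from typing import Optional

# Leading "magic bytes" that identify each format.
PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
JPEG_SIGNATURE = b"\xff\xd8\xff"


def detect_image_format(header: bytes) -> Optional[str]:
    """Return "png" or "jpeg" based on the first bytes of a file, else None."""
    if header.startswith(PNG_SIGNATURE):
        return "png"
    if header.startswith(JPEG_SIGNATURE):
        return "jpeg"
    return None


def is_supported_image(path: str) -> bool:
    """Read only the first 8 bytes of the file and check its signature."""
    with Path(path).open("rb") as f:
        return detect_image_format(f.read(8)) is not None
```

A check like this only confirms the container format. It says nothing about resolution or image quality, which also influence results.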

Step 2 Define Motion Through Prompt

Describe what should happen in the scene, including:

- Subject movement

- Camera behavior

- Emotional tone
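
One way to keep prompts consistent across attempts is to write the three elements above separately and join them only at the end. This is a rough sketch of that habit, not a required format: the `MotionPrompt` class and its field names are hypothetical, and the platform accepts free-form text.

```python
from dataclasses import dataclass


@dataclass
class MotionPrompt:
    """Hypothetical helper that keeps the three prompt elements separate."""

    subject_movement: str = ""
    camera_behavior: str = ""
    emotional_tone: str = ""

    def to_text(self) -> str:
        """Join the non-empty elements into one sentence-per-element prompt."""
        parts = [self.subject_movement, self.camera_behavior, self.emotional_tone]
        # Drop empty fields and trailing periods so the join stays clean.
        cleaned = [p.strip().rstrip(".") for p in parts if p.strip()]
        if not cleaned:
            return ""
        return ". ".join(cleaned) + "."


prompt = MotionPrompt(
    subject_movement="Character turning head",
    camera_behavior="Slow camera zoom toward subject",
    emotional_tone="Calm, reflective mood",
)
print(prompt.to_text())
```

Keeping the elements apart makes it easy to change one of them, such as the camera behavior, while leaving the rest untouched between generations.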

Step 3 Generate And Evaluate Output

The system processes the input and produces a video, typically within minutes. The result can then be reviewed, adjusted, or regenerated.

This process replaces complex editing with iterative generation.

Why Camera Movement Becomes A Creative Tool

One of the more interesting aspects is how camera motion influences perception.

Common Motion Types Observed

- Slow zoom for emotional focus

- Side pan for environmental context

- Subtle tilt for depth illusion

These are not manually controlled but emerge from prompt interpretation.

Impact On Viewer Attention

Camera motion can:

- Guide focus

- Create anticipation

- Simulate realism

Even minimal movement changes how the image is perceived.

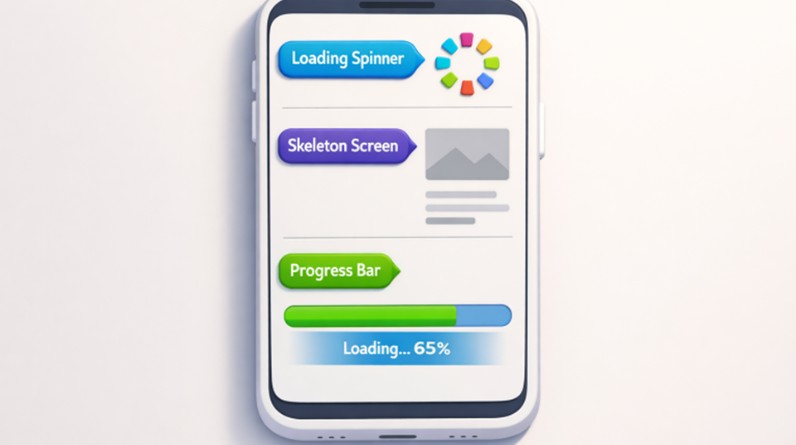

How Preset Effects Simplify Decision Making

The platform also introduces predefined motion categories.

These include:

- Character interactions

- Dance-like sequences

- Stylized animation effects

Instead of building motion from scratch, users can:

- Select a scenario

- Adjust prompts

- Iterate quickly

This reduces cognitive load, especially for non-experts.

Comparing Different Creation Approaches

To better understand its role, consider this comparison:

| Method | Input Type | Control Style | Time Investment | Output Flexibility |
| --- | --- | --- | --- | --- |
| Image-to-video | Single image + prompt | Interpretive | Low | Medium |
| Video editing | Raw footage | Manual | High | High |
| Animation software | Assets + rigging | Technical | Very high | Very high |

The system sits between simplicity and capability.

Where This Approach Is Most Useful

From practical use, several patterns emerge.

Rapid Content Iteration

- Testing multiple visual directions quickly

- Generating variations without redesigning assets

Enhancing Existing Visual Libraries

- Reusing old images

- Adding motion without reshooting

Lightweight Storytelling

- Introducing subtle narrative progression

- Creating mood without full production pipelines

Why Results Depend On Interpretation Rather Than Precision

A key characteristic is that outputs are not deterministic.

Prompt Interpretation Variability

The same instruction may produce:

- Slightly different motion paths

- Different pacing

- Different emphasis on elements
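
A toy illustration can make this variability concrete. Generative models typically start from random noise, so the same instruction can lead to different results unless the randomness is fixed. The sketch below is not the platform's model: `sample_motion` is a made-up stand-in that draws two motion parameters from a random number generator, and whether a real service exposes a seed is an assumption.

```python
import random
from typing import Optional


def sample_motion(prompt: str, seed: Optional[int] = None) -> dict:
    """Toy stand-in for a generator: draws motion parameters at random.

    With seed=None the generator is seeded from system entropy, so
    repeated calls with the same prompt usually differ.
    """
    rng = random.Random(seed)
    return {
        "prompt": prompt,
        "pacing": round(rng.uniform(0.5, 1.5), 2),      # relative speed
        "path_offset": round(rng.uniform(-1.0, 1.0), 2),  # drift of the motion path
    }


# Same prompt, different seeds: the sampled motion differs.
print(sample_motion("Slow camera zoom toward subject", seed=1))
print(sample_motion("Slow camera zoom toward subject", seed=2))
```

In this toy, fixing the seed makes a run repeatable. Real systems may not offer that control, which is why identical inputs can still vary.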

Image Quality Influence

High-quality inputs tend to:

- Produce more stable outputs

- Maintain subject consistency better

Iteration As Part Of The Process

In practice:

- The first result is rarely final

- Refinement happens through prompt adjustments

- Multiple generations are expected
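
The pattern above amounts to a small loop: try each prompt revision a few times, review every take, and keep the best one. Here is a minimal sketch of that loop. The `generate` and `score` callables are placeholders supplied by the caller (for example, a call to whatever tool is in use and a manual rating), not real API functions.

```python
from typing import Callable, List, Tuple


def iterate_prompts(
    prompts: List[str],
    generate: Callable[[str], str],
    score: Callable[[str], float],
    takes_per_prompt: int = 3,
) -> Tuple[str, str, float]:
    """Generate several takes per prompt revision and return the best one.

    Returns (prompt, clip, rating) for the highest-rated take.
    """
    best = None
    for prompt in prompts:
        for _ in range(takes_per_prompt):
            clip = generate(prompt)   # placeholder for a real generation call
            rating = score(clip)      # placeholder for human or automated review
            if best is None or rating > best[2]:
                best = (prompt, clip, rating)
    if best is None:
        raise ValueError("nothing was generated")
    return best
```

In practice the scoring step is usually a person watching each clip, so the loop is more a way of organizing attempts than of automating judgment.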

What This Means For Creative Workflow Design

The workflow shifts from:

Building → Editing → Finalizing

to:

Describing → Generating → Selecting

This changes the role of the creator from executor to director.

Where Structured Video Output Becomes Important

As projects move from experimentation to production, tools like Photo to Video begin to play a more consistent role in turning selected visuals into repeatable motion outputs that align with a broader content strategy.

This stage focuses less on exploration and more on consistency.

Limitations That Define Its Current Boundaries

Despite its flexibility, there are clear constraints.

Limited Fine Control

You cannot precisely define:

- Exact motion curves

- Frame-level adjustments

- Detailed choreography

Inconsistent Outputs Across Runs

Even with identical inputs:

- Results may vary

- Some artifacts may appear

- Motion smoothness can differ

Complex Interactions Remain Challenging

Scenes are harder to stabilize when they involve:

- Multiple subjects

- Fast interactions

- Detailed actions

Why These Limitations Do Not Reduce Its Relevance

These systems are not designed to replace high-end production tools.

They are designed to:

- Lower the barrier to motion creation

- Accelerate idea testing

- Expand what individuals can produce

In that context, their value lies in enabling new types of workflows rather than perfecting existing ones.

How Visual Thinking Continues To Evolve

The most important shift is conceptual.

Creators are no longer limited by:

- Static endpoints

- Tool complexity

- Production bottlenecks

Instead, they can think in terms of:

- Motion-first design

- Prompt-driven direction

- Iterative visual exploration

This is not just a new tool category. It is a new layer in how visual ideas are expressed.